I used to think that the path to the metaverse started with screen-based virtual worlds, then expanded to virtual reality. At some point, I thought, we’d all be doing everything in the metaverse. Just as the Internet made information instantly accessible to everyone everywhere, the metaverse would do the same for experiences and human interactions.

I spent over a decade trying to make it happen for myself and my team. We had an in-world office. I started a group for hypergrid entrepreneurs that met in OpenSim. I am on the OpenSim Community Conference organizing team, and our early meetings are in OpenSim. I even figured out a way to get my desktop and many of my apps into OpenSim, so that I could work in my virtual office.

Spoiler: I did not, in fact, ever do any significant amount of work in my virtual office.

I still think it’s possible. Well, theoretically possible, at least.

Eventually. But not in the immediate future, and not with the technology we have today.

First, until the resolution of a virtual world is as good as real life, there will be an advantage to working the old-fashioned way, especially when you’re in a graphics-heavy profession. I’m not an artist, but I do create graphics to go with blog articles and social media posts. And I’m the one responsible for web design for several outlets, including Hypergrid Business, MetaStellar, Writer vs AI, and Women in VR. That’s hard to do on a screen in a virtual world.

And don’t even get me started on trying to work in virtual reality. Even typing is hard if you can’t see the keyboard. I touch type, but sometimes I have to type special characters. I never remember where any of them are. In addition, I multi-task. I have several windows open at once and am cutting-and-pasting between them, looking things up, using calculators and other tools, and, of course, checking my phone. I can’t do most of that in virtual reality, even with a pass-through camera. And if I’m just going to be sitting at my computer, typing, why am I in a virtual reality headset, anyway?

But AR — augmented reality — well, that’s something entirely different. Instead of replacing the entire world around you with a virtual one, augmented reality just adds a little bit of the virtual to the actual world around you. Instead of looking out at the world through a distorting pass-through camera, you see the world as it is.

What this means is that, instead of a Zoom call, I can see the person I’m talking to sitting in front of me as basically a hologram. Well, they’d be projected on my glasses, but to me it would look as if they were actually there. Instead of a screen full of little Zoom faces, I could see people sitting around a conference table. I’d have to rearrange my home office so that my layout would work for this, but I had to rearrange my office anyway, so that it would look good on Zoom.

The thing is, we’re probably going to get to AR through our phones. Instead of wearing a smart watch, we’d wear smart glasses and just keep our phones in our pockets. Until the phones got so small, of course, that they’d fit completely inside the glasses frames.

The home screen of my phone is currently — blessedly! — ad-free, so I don’t expect to see pop-up ads just showing up willy-nilly in augmented reality, either. If they did, nobody would use the platform. Instead, we’d probably see ads the same places we currently see them — when we play free games and scroll through news feeds.

I can see some very interesting things happening when we get AR glasses. We’d use virtual keyboards instead of physical ones, and probably dictate quite a bit more, too.

I do like the physical feel of a tactile keyboard, but we already have Bluetooth-enabled keyboards that sync to our mobile devices, so I can easily see continuing to use one, if I prefer.

But I can also see myself dictating more.

Speech detection is getting more accurate all the time. In fact, I’m already dictating most of my text messages because it’s so convenient. And instead of physical screens, we’d get virtual screens that float at arm’s length in front of us. We could position them where we want them, and have as many screens as we want, of any size. I only use a couple of apps that don’t run on a phone: GIMP and FileMaker. Everything else I do, including word processing, is browser-based, so I can already do it on a phone.

An AR phone will make my desktop PC, monitors and keyboards and backup laptop obsolete. Well, I’d still keep my laptop, just in case, but my other hardware will go the way of all the other devices that smartphones relegated to the trash heap of history. And, also, to literal trash heaps. And Windows. I hate Windows, and will be happy to never use it again.

Since these AR smart glasses will be so convenient, everyone will be using them for everything. We’ll be living in a world that has a continuous virtual overlay on it, a magical plane that gives us superpowers.

Oh, and our AI-powered virtual assistants who are as smart as we are, or even smarter, will live inside this virtual overlay.

All the pieces are already there — including the intelligent AI. All it will take is for someone to put them together into an actually useful device.

I’m guessing that this will be Apple. When it happens, I’ll be switching back from Android the first chance I get. I originally had an iPhone, but switched to Samsung when Gear VR came out because Apple didn’t support VR. Then I switched to the Pixel because I hated Samsung so much, and because I liked Google’s Daydream VR platform.

Both Gear VR and Daydream are now gone, though Google Cardboard remains. I still see between 3,000 and 4,000 pageviews a month on my Google Cardboard headset QR Codes page. These are the codes that people use to calibrate their Google Cardboard-compatible headsets. The headsets are ridiculously bad, and have limited motion tracking, but as phone screens have gotten better, the image quality has become pretty good. It’s good enough to watch movies on a virtual screen, and, of course, for VR porn. Ya gotta admit, porn does drive technology adoption. I’ve heard.

But the phone-screen-based approach seems to be hitting a dead end, since few people want to have a huge phone screen strapped to the front of their face.

I’ve been waiting for years for Apple to do something in this space.

This might now be happening.

Here’s a quote from Apple CEO Tim Cook, in a recent interview with GQ:

“If you think about the technology itself with augmented reality, just to take one side of the AR/VR piece, the idea that you could overlay the physical world with things from the digital world could greatly enhance people’s communication, people’s connection,” Cook says. “It could empower people to achieve things they couldn’t achieve before. We might be able to collaborate on something much easier if we were sitting here brainstorming about it and all of a sudden we could pull up something digitally and both see it and begin to collaborate on it and create with it. And so it’s the idea that there is this environment that may be even better than just the real world—to overlay the virtual world on top of it might be an even better world. And so this is exciting. If it could accelerate creativity, if it could just help you do things that you do all day long and you didn’t really think about doing them in a different way.”

He didn’t deny or confirm the release of an Apple AR headset.

But, yesterday, Bloomberg reported that Apple is getting ready to unveil its augmented reality headset this June, and is already working on dedicated apps, including sports, gaming, wellness, and collaboration.

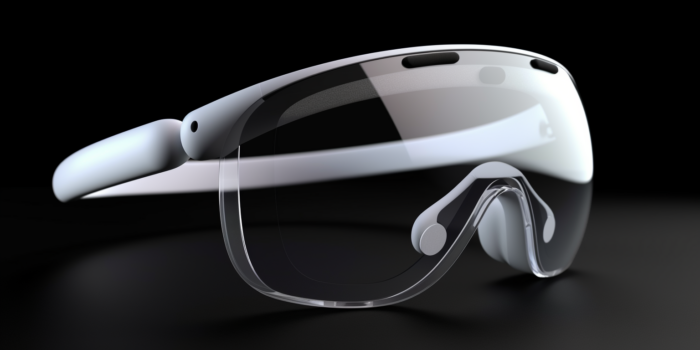

Here’s what an AI thinks that the new Apple headset might look like:

I do love my Pixel, and I’d have to replace all my Android apps with new iPhone ones if I switched, but if we’re about to hit an iPhone moment with augmented reality, I want to be first in line.
