Nvidia recently announced Iray VR and Iray VR Lite, which bring photorealistic panoramas, light-rich visuals, walkthroughs and scenes to 3D virtual spaces on personal computers and mobile devices. Starting in June this year, the company will support Iray VR Lite as a standard feature of its Iray plug-in products for Maya, Cinema 4D, Rhino, and 3ds Max.

The company demonstrated its Iray VR products at the GPU Technology Conference held in San Jose this April, but Phillip Miller, the company’s senior director of advanced rendering products, told Hypergrid Business that the demonstration marks only the company’s first steps toward virtual reality support in its Iray products.

“First, Iray VR is really our term for a new core emphasis within our Iray platform — standing alongside physically based accuracy, fast ray tracing, exchangeable materials, and incredible scaling,” he said. “So virtual reality will be a continuum for Iray, and what was shown at GPU Technology Conference are some of the first steps there.”

Support for static panoramas in Iray VR Lite, the mobile version, will allow all of Nvidia’s Iray applications to produce content that can be viewed on virtual reality headsets, said Miller.

“This is done through new Iray VR Camera types for spherical, cylindrical and box with the option to make them stereo,” said Miller. “This will enable all Iray applications to produce virtual reality content for use in any virtual reality viewer or device — Cardboard, Vive, etcetera.”
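To make the spherical camera idea concrete, here is a minimal sketch of how a panorama pixel can be mapped to a view direction, with an optional per-eye offset for stereo. This is the generic equirectangular mapping, not Nvidia's Iray API; the function names, the axis convention and the 0.064 m eye separation are all assumptions chosen for illustration.

```python
import math


def spherical_ray_direction(u, v):
    """Map normalized panorama coordinates (u, v) in [0, 1] to a unit direction.

    Assumed convention: y is up and -z is "forward" at the image center.
    u sweeps longitude from -pi to pi; v sweeps latitude from top to bottom.
    """
    lon = (u - 0.5) * 2.0 * math.pi
    lat = (0.5 - v) * math.pi
    x = math.cos(lat) * math.sin(lon)
    y = math.sin(lat)
    z = -math.cos(lat) * math.cos(lon)
    return (x, y, z)


def stereo_eye_origin(u, eye, ipd=0.064):
    """Return the ray origin for the "left" or "right" eye of a stereo panorama.

    Each eye is shifted half the eye separation (ipd, a hypothetical default
    in meters) along the horizontal "right" vector for the current longitude.
    """
    lon = (u - 0.5) * 2.0 * math.pi
    sign = 1.0 if eye == "right" else -1.0
    half = sign * ipd / 2.0
    # Right vector for a forward direction of (sin lon, 0, -cos lon) with y up.
    return (half * math.cos(lon), 0.0, half * math.sin(lon))
```

A renderer would call `spherical_ray_direction` once per output pixel and, for stereo, render the panorama twice using the two eye origins.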

Breakthrough

The Iray VR technology is a breakthrough for virtual reality scene illumination because rendering realistic frames in real time has been difficult to achieve, let alone at the 90 frames per second (roughly 11 milliseconds per frame) that desktop-class virtual reality headsets demand. In addition, with the new technology, designers no longer need knowledge of computer graphics to create photorealistic designs.

With interactive ray tracing and more accurate representation of materials, the platform will bring more accuracy and realism to virtual reality projects and should be particularly helpful for car designers, lighting designers and architects, among others. That alone could make virtual reality more useful for real-world industrial applications.

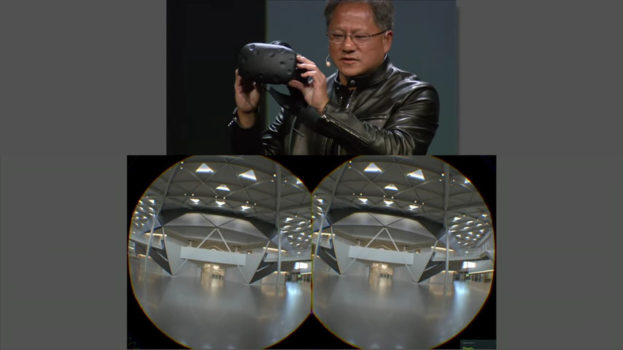

Miller pointed to the recent demonstration at the GPU Technology Conference, where company CEO Jen-Hsun Huang demonstrated Iray VR by showing the company’s not-yet-built future office rendered with the system.

“The crowd reaction to this level of interactive realism was considerable, as the presence gained here is stunning and you really do feel like you are there,” said Miller.


How it works

The plugin works by creating probes, or light fields, which are volumetric areas containing information about the light traveling through a given space. The technology follows photons of light as they travel around the room and bounce off objects, picking up the different resulting wavelengths.

Hundreds of probes are used to produce the final effect. These probes are mixed, filtered and processed. The technology samples a probe depending on the viewer’s position and generates an appropriate view for the user, which lets the user see photorealistic renders in real time. The engine is then integrated into head-mounted displays.
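As a rough illustration of position-dependent probe sampling, the sketch below blends the values of the probes nearest to the viewer. Nvidia has not described how its probes are actually mixed and filtered, so the inverse-distance weighting here is an assumed stand-in, and the `blend_probes` function and its data layout are hypothetical. A real light field would also store directional radiance rather than a single color per probe.

```python
import math


def blend_probes(viewer_pos, probes, k=4, eps=1e-9):
    """Blend the k probes nearest to viewer_pos using inverse-distance weights.

    probes is a list of (position, value) pairs, where position is an
    (x, y, z) tuple and value is a list of floats such as an RGB color.
    This weighting scheme is an assumption, not Iray's documented method.
    """
    # Pair each probe value with its distance to the viewer, nearest first.
    by_distance = sorted(
        ((math.dist(viewer_pos, pos), value) for pos, value in probes),
        key=lambda pair: pair[0],
    )
    nearest = by_distance[:k]

    # A viewer standing exactly on a probe just gets that probe's value.
    if nearest[0][0] < eps:
        return list(nearest[0][1])

    weights = [1.0 / dist for dist, _ in nearest]
    total = sum(weights)
    channels = len(nearest[0][1])
    return [
        sum(w * value[i] for w, (_, value) in zip(weights, nearest)) / total
        for i in range(channels)
    ]
```

With only two probes, a viewer midway between them receives an even mix of both, and the result shifts smoothly toward whichever probe the viewer approaches.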

However, full Iray VR will require a personal computer with a high-end graphics card, and the company is not yet announcing when the technology will come to market, said Miller.

“Playback for Iray light fields requires a personal computer with a flagship graphics processing unit,” said Miller. “At GTC we were viewing this with the Vive. For GTC, this was a technology demo, and we’re not yet announcing how this will come to market.”
